However, for rest of the month, the traffic is expected to be low. You expect a spike in customer inquiries at the end of March, before tax filing deadline. Let’s assume you are running a chat-bot service for a payroll processing company. In this example, the data processing charges apply to the request and response body, but not to the data transferred to/from Amazon S3. Inference outputs are 1/10 the size of the input data, which are stored back in Amazon S3 in the same Region. The size of each invocation request/response body is 10 KB, and each inference request payload in Amazon S3 is 100 MB. The endpoint processes 1,024 requests per day.

Therefore, you are charged for 2.5 hours of usage per day. In this example, the endpoint maintains an instance count of 1 for 2 hours per day and has a cooldown period of 30 minutes, after which it scales down to an instance count of zero for the rest of the day. The ml.c5.xlarge instance in the endpoint has a 4 GB general-purpose (SSD) storage attached to it. The endpoint is configured to run on 1 ml.c5.xlarge instance and scale down the instance count to zero when not actively processing requests. The model in example #5 is used to run an SageMaker Asynchronous Inference endpoint. For input payloads in Amazon S3, there is no cost for reading input data from Amazon S3 and writing the output data to S3 in the same Region. When not actively processing requests, you can configure auto-scaling to scale the instance count to zero to save on costs. Amazon SageMaker Studio LabĪmazon SageMaker Asynchronous Inference charges you for instances used by your endpoint. You pay only for the underlying compute and storage resources within SageMaker or other AWS services, based on your usage. SageMaker Inference Recommender to get recommendations for the right endpoint configuration.SageMaker JumpStart to easily deploy ML solutions for many use cases. You may incur charges from other AWS Services used in the solution for the underlying API calls made by Amazon SageMaker on your behalf.SageMaker Clarify to better explain your ML models and detect bias.SageMaker Model Monitor to maintain high-quality models.

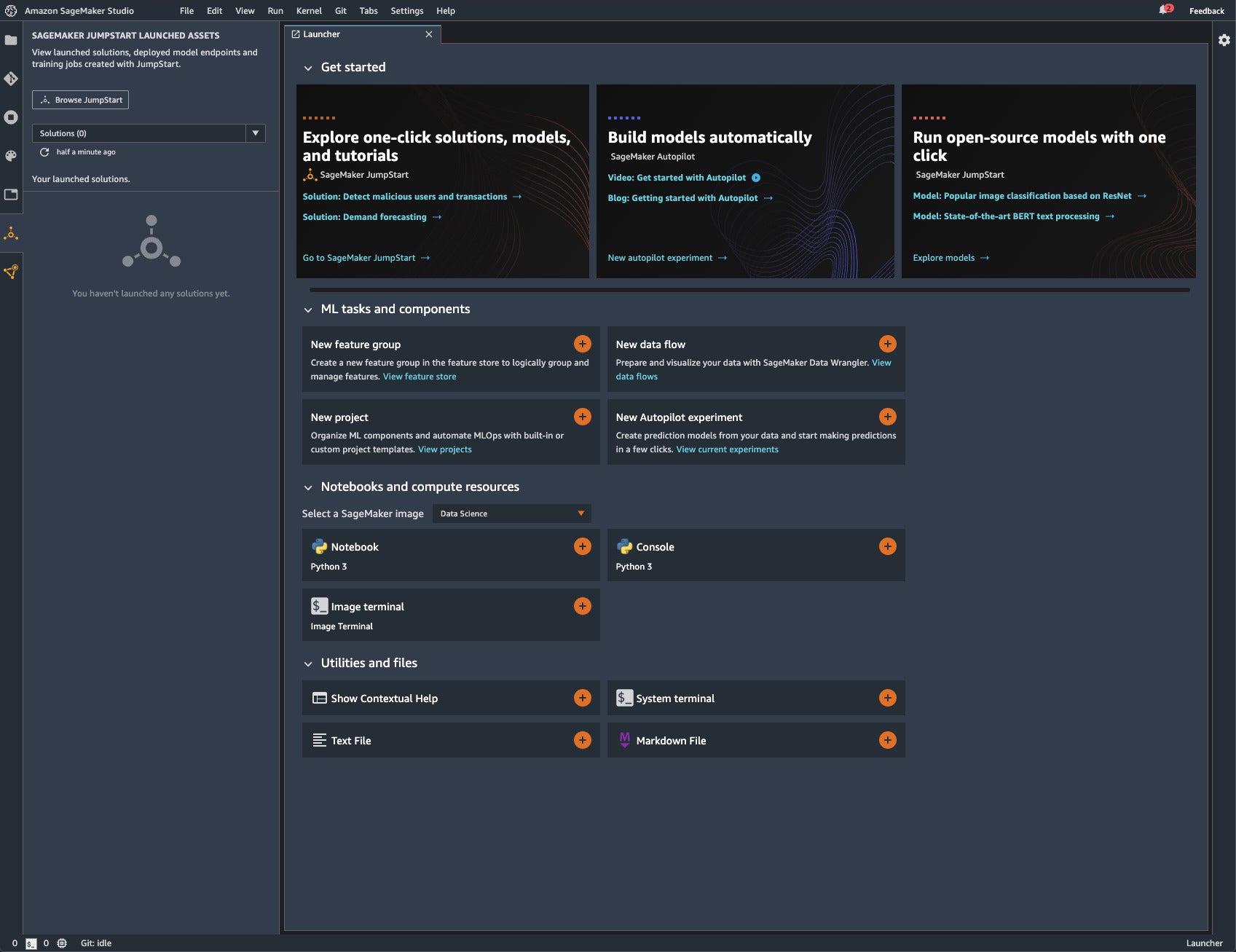

SageMaker Debugger to debug anomalies during training.SageMaker Experiments to organize and track your training jobs and versions.SageMaker Autopilot to automatically create ML models with full visibility.SageMaker Pipelines to automate and manage ML workflows.You can use many services from SageMaker Studio, AWS SDK for Python (Boto3), or AWS CLI, including: Using SageMaker Studio, you pay only for the underlying compute and storage that you use within Studio. SageMaker Studio gives you complete access and visibility into each step required to build, train, and deploy models. You can now access Amazon SageMaker Studio, the first fully integrated development environment (IDE) at no additional charge. Free Tier usage per month for the first 2 monthsĢ50 hours of ml.t3.medium instance on Studio notebooks OR 250 hours of ml.t2 medium instance or ml.t3.medium instance on notebook instancesĢ50 hours of ml.t3.medium instance on RSession app AND free ml.t3.medium instance for RStudioServerPro appġ0 million write units, 10 million read units, 25ĥ0 hours of m4.xlarge or m5.xlarge instancesġ25 hours of m4.xlarge or m5.xlarge instancesġ50,000 seconds of on-demand inference durationġ60 hours/month for session time, and up to 10 model creation requests/month, each with up to 1 million cells/model creation requestįree Tier usage per month for the first 6 monthsġ00,000 metric records ingested per month, 1 million metric records retrieved per month, and 100,000 metric records stored per month
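As a rough check on the asynchronous inference example above (one instance active for 2 hours per day plus a 30-minute cooldown, 1,024 requests per day, 10 KB bodies, 100 MB payloads in Amazon S3), the usage arithmetic can be sketched in Python. This is only an illustration: the function names are made up for this sketch, the hourly rate is a placeholder you would supply yourself, and it assumes the 10 KB body is counted once per invocation and that 1 MB = 1,024 KB.

```python
# Figures quoted in the asynchronous inference example.
ACTIVE_HOURS_PER_DAY = 2.0      # instance count of 1 for 2 hours per day
COOLDOWN_HOURS_PER_DAY = 0.5    # 30-minute cooldown before scaling to zero
REQUESTS_PER_DAY = 1024
BODY_KB_PER_REQUEST = 10        # assumption: counted once per invocation
S3_INPUT_MB_PER_REQUEST = 100
OUTPUT_DIVISOR = 10             # outputs are 1/10 the size of the inputs


def billable_hours(days):
    """Instance-hours charged: active time plus cooldown, per day."""
    return days * (ACTIVE_HOURS_PER_DAY + COOLDOWN_HOURS_PER_DAY)


def body_data_mb(days):
    """Request/response body volume subject to data processing charges."""
    return days * REQUESTS_PER_DAY * BODY_KB_PER_REQUEST / 1024


def s3_data_gb(days):
    """(input_gb, output_gb) moved via Amazon S3; not charged as data
    processing in this example."""
    input_mb = days * REQUESTS_PER_DAY * S3_INPUT_MB_PER_REQUEST
    output_mb = input_mb / OUTPUT_DIVISOR
    return input_mb / 1024, output_mb / 1024


def instance_cost(days, hourly_rate):
    """Compute cost for a given hourly rate (hypothetical placeholder:
    look up the actual rate for your instance type and Region)."""
    return billable_hours(days) * hourly_rate


if __name__ == "__main__":
    print("Billable hours per day:", billable_hours(1))
    print("Body data per day (MB):", body_data_mb(1))
    print("S3 input/output per day (GB):", s3_data_gb(1))
```

Keeping the rate as a parameter avoids hard-coding a price that varies by Region and changes over time; storage charges for the attached 4 GB volume are not modelled here.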
